
OpenAI’s Limited Control Over Military Applications

In a recent all-hands meeting at OpenAI, CEO Sam Altman addressed the company’s position on the military’s use of its technology. Altman made it clear that OpenAI does not control how military entities choose to use its technologies. The statement comes amid growing concern about the ethical implications and potential misuse of artificial intelligence in military operations.

Altman’s remarks underscore a significant challenge for technology companies as they navigate the ethical and practical complexities of AI. OpenAI, known for developing advanced artificial intelligence models like ChatGPT, operates under a charter that aims to develop AI in a way that benefits humanity as a whole. Once deployed, however, a technology can be applied in ways its creators never intended.

AI Ethics and Government Collaboration

AI’s role in military applications has been a topic of heated debate. Many tech companies, including OpenAI, collaborate with government bodies to ensure their technologies are used responsibly. However, the ultimate operational decisions, especially those related to defense and military strategy, rest with the government. This separation between the developer of a technology and its operator highlights the need for robust ethical frameworks and oversight mechanisms.

The U.S. Department of Defense has expressed interest in leveraging AI for defense purposes, stating that AI could significantly enhance national security. As AI continues to evolve, the potential for its application in defense strategies grows, raising questions about transparency, accountability, and the balance of power between tech developers and government agencies.

Market Implications and Investor Concerns

The conversation around AI and its use in military contexts can also impact market perceptions. Companies involved in AI development, such as OpenAI, may experience fluctuations in investor sentiment based on their perceived ethical stance and the potential risks associated with their technologies. Investors are increasingly evaluating companies not only on their financial performance but also on their ethical practices and the societal impacts of their products.

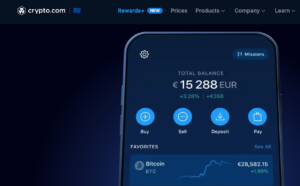

For instance, the cryptocurrency market has closely watched developments in AI, as digital assets like Bitcoin ($BTC) and Ethereum ($ETH) have seen increased integration with AI technologies. As AI continues to advance, it could influence blockchain and cryptocurrency technologies, creating new opportunities and challenges for market participants.

The broader tech market remains attentive to how companies like OpenAI navigate these complex issues. The integration of AI into various sectors, including defense, is likely to continue driving discussions about the ethical responsibilities of tech companies and the role of regulation in ensuring responsible use.

Conclusion and Future Outlook

As AI technology becomes more embedded in daily life and strategic national initiatives, companies like OpenAI find themselves at the crossroads of innovation and ethical responsibility. Sam Altman’s comments serve as a reminder of the limitations tech companies face in controlling the end use of their innovations, especially when it comes to military applications.

Going forward, the industry will need to continue developing partnerships with government entities to ensure that AI technologies are deployed ethically and effectively. This will involve ongoing dialogue and the establishment of clear ethical guidelines. For investors and market participants, understanding these dynamics will be crucial in evaluating the long-term prospects of companies leading the AI charge.
